Starlink – Catastrophic Architectural Failure

Starlink has a catastrophic architectural failure which is a shining example of why Agile can never be allowed even as close as the parking lot to any project of any size. If you attended a so-called University and they taught you Agile, you attended a Trump University and should sue to get your money back. Absolutely no business should recognize your degree. Agile is just hacking on the fly until the money runs out. Starlink …

Agile

Not All Movement is Forward

If you have never had to endure software development run by MBAs, you probably don’t hate the word movement. Decades ago, I was contracting at Caremark between two of their bigger Medicare fraud convictions. Not their current record fraud; this was between other records. Look it up, they’ve got a lot of them. You also have to look up MedPartners, but I digress. Yes, this is a follow-up post to my Fedora 43 post of this …

Ubuntu F’ed Networking Again!

I’ve lost count of the number of times Ubuntu F’ed Networking over the course of my career. Was that the 2008 edition when the ISO ran great, it installed, and after it installed updates, all wifi using Broadcom chips ceased to work? It was great! They were used in something like 80+% of all laptops and I don’t know how many of those USB Wifi dongles. Ubuntu just can’t be used for anything that matters. …

ID.me Duth Sucketh

ID.me is a shining example of why Agile is not a valid software development methodology. It is also a shining example of Elon Musk and DOGE’s limitless incompetence. One should expect nothing less from someone who not only builds the ugliest vehicle ever made in any country, but also makes it so poorly that body parts fall off. These imbeciles want you to use a “smart” phone app to control access to your irs.gov account. Oh, …

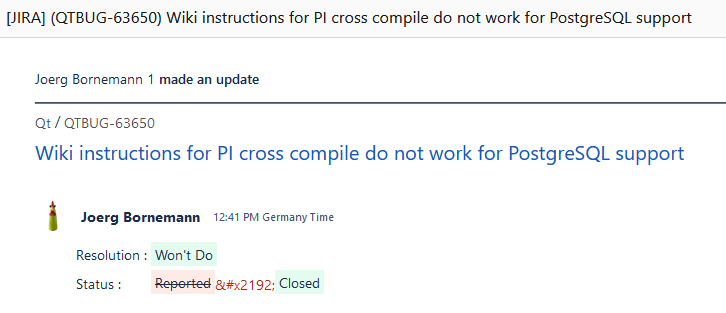

How Qt Bugs Get Fixed

Ho Ho Ho, Merry Christmas. Just let bad shit keep being bad shit. Agile is not Software Engineering. The email says it all. Happy bug-riddled holidays.